FILTER TRANSFORMATION

Example: to filter records where SAL>2000

Create Session and Workflow as described earlier. Run the workflow and see the data in target table.

How to filter out rows with null values?

To filter out rows containing null values or spaces, use the ISNULL and IS_SPACES Functions to test the value of the port. For example, if we want to filter out rows that Contain NULLs in the FIRST_NAME port, use the following condition:

IIF (ISNULL (FIRST_NAME), FALSE, TRUE)

This condition states that if the FIRST_NAME port is NULL, the return value is FALSE and the row should be discarded. Otherwise, the row passes through to the next Transformation.

Performance tuning:

Filter transformation is used to filter off unwanted fields based on conditions we Specify.

Example: If we want to keep employees of France, India, US in 3 different tables, then we can use 3 Filter transformations or 1 Router transformation.

Mapping A uses three Filter transformations while Mapping B produces the same result with one Router transformation.

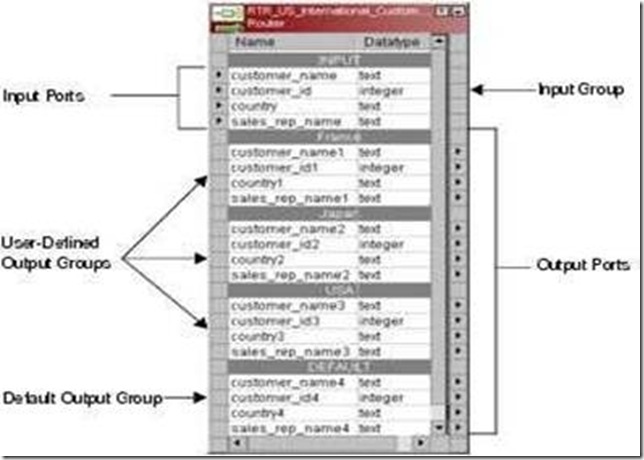

A Router transformation consists of input and output groups, input and output ports, group filter conditions, and properties that we configure in the Designer.

Working with Groups

A Router transformation has the following types of groups:

The Default Group: The Designer creates the default group after we create one new user-defined group. The Designer does not allow us to edit or delete the default group. This group does not have a group filter condition associated with it. If all of the conditions evaluate to FALSE, the IS passes the row to the default group.

Example: Filtering employees of Department 10 to EMP_10, Department 20 to EMP_20 and rest to EMP_REST

1. Open folder where we want to create the mapping.

2. Click Tools -> Mapping Designer.

3. Click Mapping-> Create-> Give mapping name. Ex: m_router_example

4. Drag EMP from source in mapping.

5. Click Transformation -> Create -> Select Router from list. Give name and

Click Create. Now click done.

6. Pass ports from SQ_EMP to Router Transformation.

7. Edit Router Transformation. Go to Groups Tab

8. Click the Groups tab, and then click the Add button to create a user-defined Group. The default group is created automatically..

9. Click the Group Filter Condition field to open the Expression Editor.

10. Enter a group filter condition. Ex: DEPTNO=10

11. Click Validate to check the syntax of the conditions you entered.

12. Create another group for EMP_20. Condition: DEPTNO=20

13. The rest of the records not matching the above two conditions will be passed to DEFAULT group. See sample mapping

14. Click OK -> Click Apply -> Click Ok.

15. Now connect the ports from router to target tables.

16. Click Mapping -> Validate

17. Repository -> Save

Difference between Router and Filter :

We cannot pass rejected data forward in filter but we can pass it in router. Rejected data is in Default Group of router.

============================================================

SORTER TRANSFORMATION

The Sorter transformation contains only input/output ports. All data passing through the Sorter transformation is sorted according to a sort key. The sort key is one or more ports that we want to use as the sort criteria.

Sorter Transformation Properties

1. Sorter Cache Size:

The Power Center Server uses the Sorter Cache Size property to determine the maximum amount of memory it can allocate to perform the sort operation. The Power Center Server passes all incoming data into the Sorter transformation Before it performs the sort operation.

The Case Sensitive property determines whether the Power Center Server considers case when sorting data. When we enable the Case Sensitive property, the Power Center Server sorts uppercase characters higher than lowercase characters.

3. Work Directory

Directory Power Center Server uses to create temporary files while it sorts data.

4. Distinct:

Check this option if we want to remove duplicates. Sorter will sort data according to all the ports when it is selected.

Example: Sorting data of EMP by ENAME

1. Open folder where we want to create the mapping.

2. Click Tools -> Mapping Designer.

3. Click Mapping-> Create-> Give mapping name. Ex: m_sorter_example

4. Drag EMP from source in mapping.

5. Click Transformation -> Create -> Select Sorter from list. Give name and click Create. Now click done.

6. Pass ports from SQ_EMP to Sorter Transformation.

7. Edit Sorter Transformation. Go to Ports Tab

8. Select ENAME as sort key. CHECK mark on KEY in front of ENAME.

9. Click Properties Tab and Select Properties as needed.

10. Click Apply -> Ok.

11. Drag target table now.

12. Connect the output ports from Sorter to target table.

13. Click Mapping -> Validate

14. Repository -> Save

![clip_image002[5] clip_image002[5]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEhRW_4rfTV7LxWHpf8WCb3xRGfiP_4SDcVE4V4zZOUO_eERk1VNoBzgN298Yxgc4iZMxPPm6K9TVRX6yse1CjyRlR-hgb8wwVtVjgxb32JCLEutQ_i5F04WEUj9vUMQblyAQ1DN3BjH6b4R/?imgmax=800)

Performance Tuning:

Sorter transformation is used to sort the input data.

During the session, the Power Center Server caches input data until it can perform The rank calculations.

Rank Transformation Properties :

Rank Index

The Designer automatically creates a RANKINDEX port for each Rank transformation. The Power Center Server uses the Rank Index port to store the ranking position for Each row in a group.

For example, if we create a Rank transformation that ranks the top five salaried employees, the rank index numbers the employees from 1 to 5.

Rank transformation allows us to group information. For example: If we want to select the top 3 salaried employees of each Department, we can define a group for Department.

RANK CACHE

Sample Rank Mapping

When the Power Center Server runs a session with a Rank transformation, it compares an input row with rows in the data cache. If the input row out-ranks a Stored row, the Power Center Server replaces the stored row with the input row.

Example: Power Center caches the first 5 rows if we are finding top 5 salaried Employees. When 6th row is read, it compares it with 5 rows in cache and places it in Cache is needed.

1) RANK INDEX CACHE:

The index cache holds group information from the group by ports. If we are Using Group By on DEPTNO, then this cache stores values 10, 20, 30 etc.

It holds row data until the Power Center Server completes the ranking and is Generally larger than the index cache. To reduce the data cache size, connect Only the necessary input/output ports to subsequent transformations.

- Active and connected transformation.

Example: to filter records where SAL>2000

- Import the source table EMP in Shared folder. If it is already there, then don’t Import.

- In shared folder, create the target table Filter_Example. Keep all fields as in EMP table.

- Create the necessary shortcuts in the folder.

- Open folder where we want to create the mapping.

- Click Tools -> Mapping Designer.

- Click Mapping -> Create -> Give mapping name. Ex: m_filter_example

- Drag EMP from source in mapping.

- Click Transformation -> Create -> Select Filter from list. Give name and click Create. Now click done.

- Pass ports from SQ_EMP to Filter Transformation.

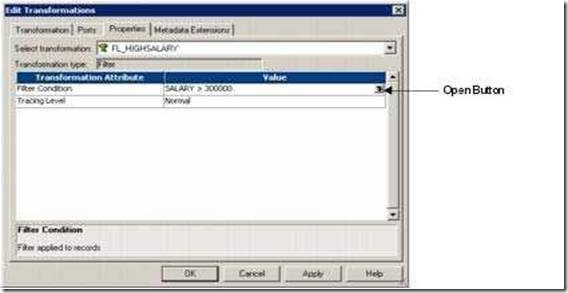

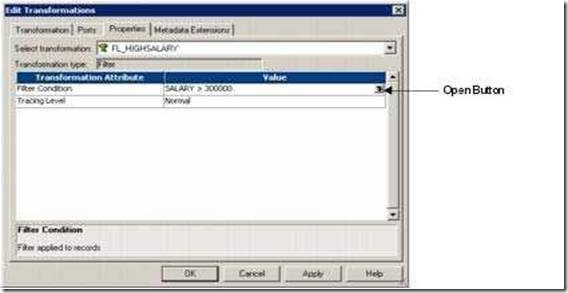

- Edit Filter Transformation. Go to Properties Tab

- Click the Value section of the Filter condition, and then click the Open button.

- The Expression Editor appears.

- Enter the filter condition you want to apply.

- Click Validate to check the syntax of the conditions you entered.

- Click OK -> Click Apply -> Click Ok.

- Now connect the ports from Filter to target table.

- Click Mapping -> Validate

- Repository -> Save

Create Session and Workflow as described earlier. Run the workflow and see the data in target table.

How to filter out rows with null values?

To filter out rows containing null values or spaces, use the ISNULL and IS_SPACES Functions to test the value of the port. For example, if we want to filter out rows that Contain NULLs in the FIRST_NAME port, use the following condition:

IIF (ISNULL (FIRST_NAME), FALSE, TRUE)

This condition states that if the FIRST_NAME port is NULL, the return value is FALSE and the row should be discarded. Otherwise, the row passes through to the next Transformation.

Performance tuning:

Filter transformation is used to filter off unwanted fields based on conditions we Specify.

- Use filter transformation as close to source as possible so that unwanted data gets Eliminated sooner.

- If elimination of unwanted data can be done by source qualifier instead of filter,Then eliminate them at Source Qualifier itself.

- Use conditional filters and keep the filter condition simple, involving TRUE/FALSE or 1/0

- Active and connected transformation.

Example: If we want to keep employees of France, India, US in 3 different tables, then we can use 3 Filter transformations or 1 Router transformation.

Mapping A uses three Filter transformations while Mapping B produces the same result with one Router transformation.

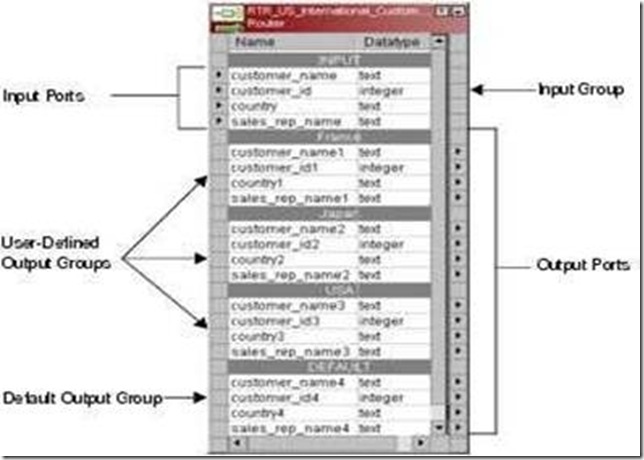

A Router transformation consists of input and output groups, input and output ports, group filter conditions, and properties that we configure in the Designer.

Working with Groups

A Router transformation has the following types of groups:

- Input: The Group that gets the input ports.

- Output: User Defined Groups and Default Group. We cannot modify or delete Output ports or their properties.

The Default Group: The Designer creates the default group after we create one new user-defined group. The Designer does not allow us to edit or delete the default group. This group does not have a group filter condition associated with it. If all of the conditions evaluate to FALSE, the IS passes the row to the default group.

Example: Filtering employees of Department 10 to EMP_10, Department 20 to EMP_20 and rest to EMP_REST

- Source is EMP Table.

- Create 3 target tables EMP_10, EMP_20 and EMP_REST in shared folder. Structure should be same as EMP table.

- Create the shortcuts in your folder.

1. Open folder where we want to create the mapping.

2. Click Tools -> Mapping Designer.

3. Click Mapping-> Create-> Give mapping name. Ex: m_router_example

4. Drag EMP from source in mapping.

5. Click Transformation -> Create -> Select Router from list. Give name and

Click Create. Now click done.

6. Pass ports from SQ_EMP to Router Transformation.

7. Edit Router Transformation. Go to Groups Tab

8. Click the Groups tab, and then click the Add button to create a user-defined Group. The default group is created automatically..

9. Click the Group Filter Condition field to open the Expression Editor.

10. Enter a group filter condition. Ex: DEPTNO=10

11. Click Validate to check the syntax of the conditions you entered.

12. Create another group for EMP_20. Condition: DEPTNO=20

13. The rest of the records not matching the above two conditions will be passed to DEFAULT group. See sample mapping

14. Click OK -> Click Apply -> Click Ok.

15. Now connect the ports from router to target tables.

16. Click Mapping -> Validate

17. Repository -> Save

- Create Session and Workflow as described earlier. Run the Workflow and see the data in target table.

- Make sure to give connection information for all 3 target tables.

Difference between Router and Filter :

We cannot pass rejected data forward in filter but we can pass it in router. Rejected data is in Default Group of router.

============================================================

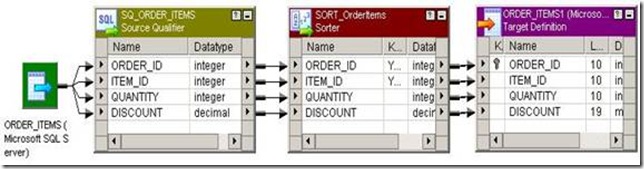

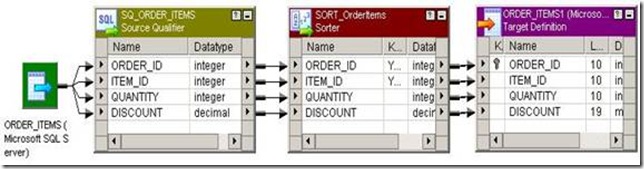

SORTER TRANSFORMATION

- Connected and Active Transformation

- The Sorter transformation allows us to sort data.

- We can sort data in ascending or descending order according to a specified sort key.

- We can also configure the Sorter transformation for case-sensitive sorting, and specify whether the output rows should be distinct.

The Sorter transformation contains only input/output ports. All data passing through the Sorter transformation is sorted according to a sort key. The sort key is one or more ports that we want to use as the sort criteria.

Sorter Transformation Properties

1. Sorter Cache Size:

The Power Center Server uses the Sorter Cache Size property to determine the maximum amount of memory it can allocate to perform the sort operation. The Power Center Server passes all incoming data into the Sorter transformation Before it performs the sort operation.

- We can specify any amount between 1 MB and 4 GB for the Sorter cache size.

- If it cannot allocate enough memory, the Power Center Server fails the Session.

- For best performance, configure Sorter cache size with a value less than or equal to the amount of available physical RAM on the Power Center Server machine.

- Informatica recommends allocating at least 8 MB of physical memory to sort data using the Sorter transformation.

The Case Sensitive property determines whether the Power Center Server considers case when sorting data. When we enable the Case Sensitive property, the Power Center Server sorts uppercase characters higher than lowercase characters.

3. Work Directory

Directory Power Center Server uses to create temporary files while it sorts data.

4. Distinct:

Check this option if we want to remove duplicates. Sorter will sort data according to all the ports when it is selected.

Example: Sorting data of EMP by ENAME

- Source is EMP table.

- Create a target table EMP_SORTER_EXAMPLE in target designer. Structure same as EMP table.

- Create the shortcuts in your folder.

1. Open folder where we want to create the mapping.

2. Click Tools -> Mapping Designer.

3. Click Mapping-> Create-> Give mapping name. Ex: m_sorter_example

4. Drag EMP from source in mapping.

5. Click Transformation -> Create -> Select Sorter from list. Give name and click Create. Now click done.

6. Pass ports from SQ_EMP to Sorter Transformation.

7. Edit Sorter Transformation. Go to Ports Tab

8. Select ENAME as sort key. CHECK mark on KEY in front of ENAME.

9. Click Properties Tab and Select Properties as needed.

10. Click Apply -> Ok.

11. Drag target table now.

12. Connect the output ports from Sorter to target table.

13. Click Mapping -> Validate

14. Repository -> Save

- Create Session and Workflow as described earlier. Run the Workflow and see the data in target table.

- Make sure to give connection information for all tables.

![clip_image002[5] clip_image002[5]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEhRW_4rfTV7LxWHpf8WCb3xRGfiP_4SDcVE4V4zZOUO_eERk1VNoBzgN298Yxgc4iZMxPPm6K9TVRX6yse1CjyRlR-hgb8wwVtVjgxb32JCLEutQ_i5F04WEUj9vUMQblyAQ1DN3BjH6b4R/?imgmax=800)

Performance Tuning:

Sorter transformation is used to sort the input data.

- While using the sorter transformation, configure sorter cache size to be larger than the input data size.

- Configure the sorter cache size setting to be larger than the input data size while Using sorter transformation.

- At the sorter transformation, use hash auto keys partitioning or hash user keys Partitioning.

- Active and connected transformation

During the session, the Power Center Server caches input data until it can perform The rank calculations.

Rank Transformation Properties :

- Cache Directory where cache will be made.

- Top/Bottom Rank as per need

- Number of Ranks Ex: 1, 2 or any number

- Case Sensitive Comparison can be checked if needed

- Rank Data Cache Size can be set

- Rank Index Cache Size can be set

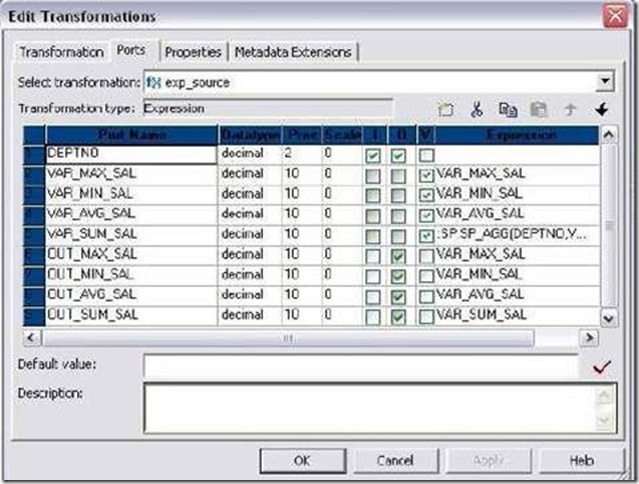

| Ports | Number Required | Description |

| I | 1 Minimum | Port to receive data from another transformation. |

| O | 1 Minimum | Port we want to pass to other transformation. |

| V | not needed | can use to store values or calculations to use in an expression. |

| R | Only 1 | Rank port. Rank is calculated according to it. The Rank port is an input/output port. We must link the Rank port to another transformation. Example: Total Salary |

Rank Index

The Designer automatically creates a RANKINDEX port for each Rank transformation. The Power Center Server uses the Rank Index port to store the ranking position for Each row in a group.

For example, if we create a Rank transformation that ranks the top five salaried employees, the rank index numbers the employees from 1 to 5.

- The RANKINDEX is an output port only.

- We can pass the rank index to another transformation in the mapping or directly to a target.

- We cannot delete or edit it.

Rank transformation allows us to group information. For example: If we want to select the top 3 salaried employees of each Department, we can define a group for Department.

- By defining groups, we create one set of ranked rows for each group.

- We define a group in Ports tab. Click the Group By for needed port.

- We cannot Group By on port which is also Rank Port.

- EMP will be source table.

- Create a target table EMP_RANK_EXAMPLE in target designer. Structure should be same as EMP table. Just add one more port Rank_Index to store RANK INDEX.

- Create the shortcuts in your folder.

- Open folder where we want to create the mapping.

- Click Tools -> Mapping Designer.

- Click Mapping-> Create-> Give mapping name. Ex: m_rank_example

- Drag EMP from source in mapping.

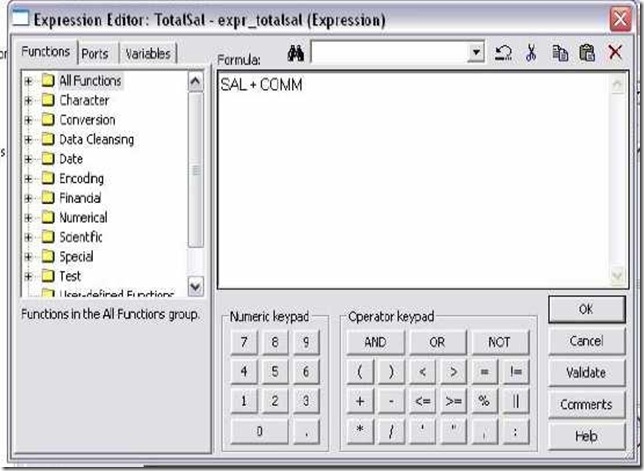

- Create an EXPRESSION transformation to calculate TOTAL_SAL.

- Click Transformation -> Create -> Select RANK from list. Give name and click Create. Now click done.

- Pass ports from Expression to Rank Transformation.

- Edit Rank Transformation. Go to Ports Tab

- Select TOTAL_SAL as rank port. Check R type in front of TOTAL_SAL.

- Click Properties Tab and Select Properties as needed.

- Top in Top/Bottom and Number of Ranks as 5.

- Click Apply -> Ok.

- Drag target table now.

- Connect the output ports from Rank to target table.

- Click Mapping -> Validate

- Repository -> Save

- Create Session and Workflow as described earlier. Run the Workflow and see the data in target table.

- Make sure to give connection information for all tables.

- Open the mapping made above. Edit Rank Transformation.

- Go to Ports Tab. Select Group By for DEPTNO.

- Go to Properties tab. Set Number of Ranks as 2.

- Click Apply -> Ok.

- Mapping -> Validate and Repository Save.

RANK CACHE

Sample Rank Mapping

When the Power Center Server runs a session with a Rank transformation, it compares an input row with rows in the data cache. If the input row out-ranks a Stored row, the Power Center Server replaces the stored row with the input row.

Example: Power Center caches the first 5 rows if we are finding top 5 salaried Employees. When 6th row is read, it compares it with 5 rows in cache and places it in Cache is needed.

1) RANK INDEX CACHE:

The index cache holds group information from the group by ports. If we are Using Group By on DEPTNO, then this cache stores values 10, 20, 30 etc.

- All Group By Columns are in RANK INDEX CACHE. Ex. DEPTNO

It holds row data until the Power Center Server completes the ranking and is Generally larger than the index cache. To reduce the data cache size, connect Only the necessary input/output ports to subsequent transformations.

- All Variable ports if there, Rank Port, All ports going out from RANK Transformations are stored in RANK DATA CACHE.

- Example: All ports except DEPTNO In our mapping example.

No comments:

Post a Comment